Optimal Spatial Sampling via Information Theory

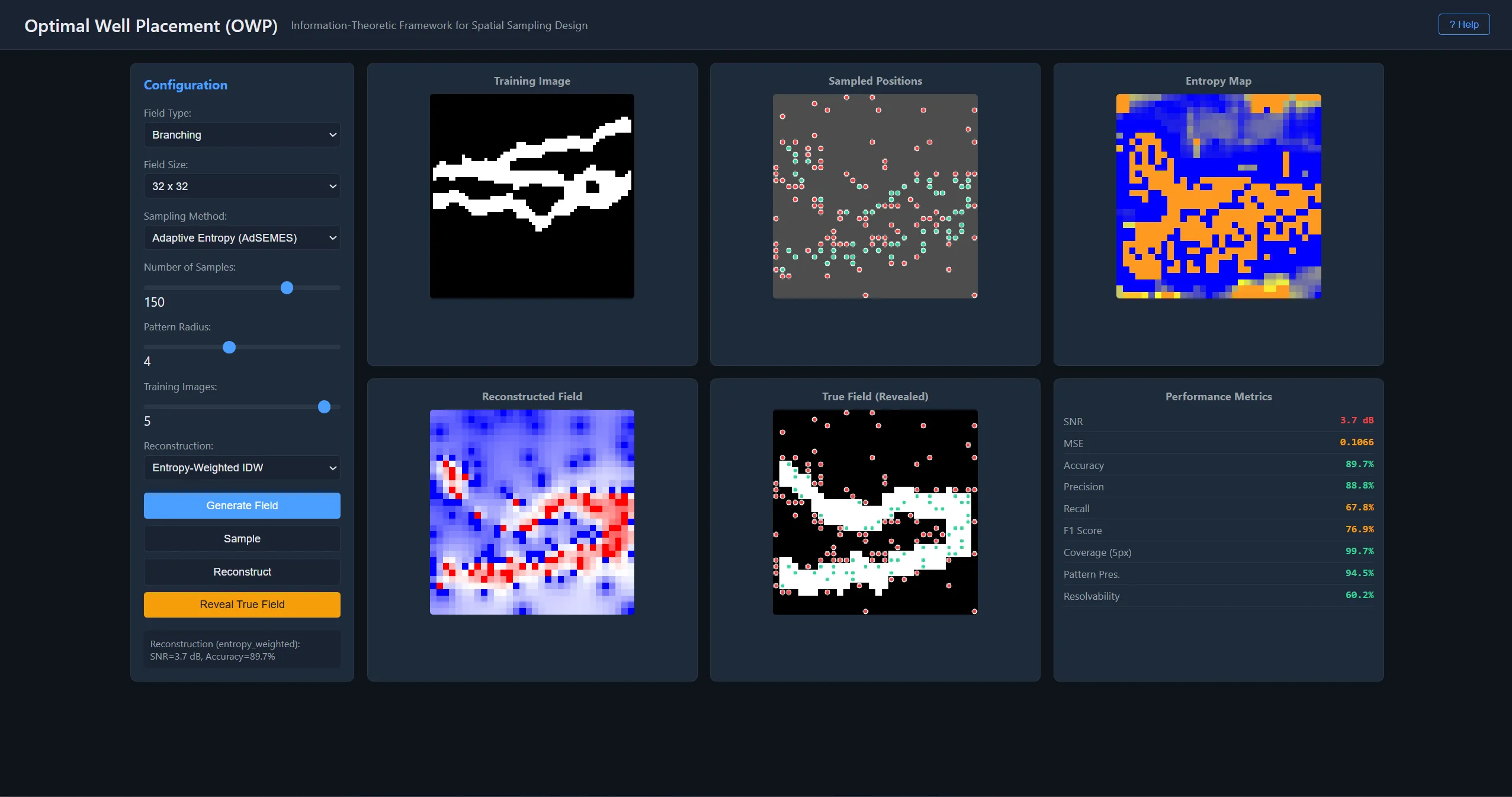

Implements the AdSEMES algorithm for optimal spatial sampling in binary random fields — specifically channelized geological reservoirs. Companion code to published papers in Mathematical Geosciences and Natural Resources Research.

Business Context

In mineral exploration and geostatistics, every drill hole or sensor placement costs significant time and money. The question of where to place the next measurement to maximize geological information is a combinatorial optimization problem with C(H×W, K) possible configurations — computationally intractable for real-world field sizes.

Strategic Value

This implementation of the AdSEMES algorithm provides optimal spatial sampling with a provable (1-1/e) ≈ 63.2% approximation guarantee via submodularity. Six sampling strategies are compared systematically (random, stratified, multiscale, oracle, adaptive entropy, regular grid) with spatial penalty functions preventing measurement clustering. Three reconstruction methods (nearest neighbor, indicator kriging, entropy-weighted inverse distance) recover the full field from sparse observations. Companion code to published papers in Mathematical Geosciences and Natural Resources Research. Developed under Fondecyt Grant 1140840.

The Challenge

Given K measurements on an H×W binary field, minimize posterior uncertainty. The combinatorial search over C(H×W, K) candidates is NP-hard, requiring efficient approximation with provable quality bounds.

Our Approach

Greedy sequential selection exploiting submodularity achieves 63.2% approximation guarantee. Compares six sampling strategies (random, stratified, multiscale, oracle, adaptive entropy, regular grid) with spatial penalty functions. Three reconstruction methods: nearest neighbor, indicator kriging, entropy-weighted inverse-distance.

Key Performance Indicators

| KPI | Baseline | Result | Impact |

|---|---|---|---|

| Sampling Strategies | Regular grid or random | 6 strategies with entropy optimization | Information-theoretic placement |

| Reconstruction | Single method | 3 methods compared systematically | Best method per scenario |

Architecture

ids owp

The Combinatorial Explosion

Given K measurements on an H×W binary random field, the number of possible placement configurations is C(H×W, K). For a 50×50 field with 20 measurements: over 10²⁶ possibilities. Exhaustive search is NP-hard. And traditional approaches — regular grids, random placement — ignore spatial information content entirely.

Six Strategies Compared

The system implements six fundamentally different approaches: random placement (baseline), stratified sampling (random within grid cells), multiscale refinement (coarse to fine), an oracle using true field knowledge (theoretical upper bound), the AdSEMES entropy maximization method, and regular grid spacing (standard practice).

Each strategy can incorporate spatial penalty functions that discourage clustering — important because entropy-optimal placement can concentrate measurements in high-uncertainty regions at the expense of spatial coverage.

Three reconstruction methods complete the pipeline: nearest neighbor (fast, no assumptions), indicator kriging (optimal under stationarity), and entropy-weighted inverse distance (hybrid approach weighting by both proximity and information content).

The Submodularity Guarantee

Entropy maximization satisfies submodularity: the marginal information gain from adding a measurement decreases as the existing set grows. This mathematical property guarantees the greedy sequential algorithm achieves at least (1 - 1/e) ≈ 63.2% of the globally optimal information gain. This is a provable bound, not an empirical observation — the greedy solution is certifiably close to optimal despite the combinatorial explosion of the search space.

Developed at the IDS Group, Universidad de Chile (2014–2016), under Fondecyt Grant 1140840.

Technology Stack

Application Screenshots

Technical Diagrams

owp adsemes

owp information theory

owp resolvability

owp sampling comparison